Generating New Models

The Model Generator dialog provides options for generating new Deep Learning and Machine Learning (Classical) models for the current Segmentation Wizard session. The appearance of the dialog changes according to the selected model type, Deep Learning or Machine Learning (Classical).

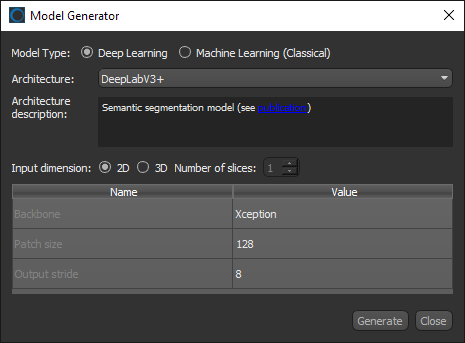

The Model Generator dialog provides options — architectures, input dimensions, and a select number of editable parameters — for generating new Deep Learning models.

Click the New button on the Models tab to open the Model Generator dialog, shown below. Then select Deep Learning as the Model Type to view the options for generating new Deep Learning models.

Model Generator dialog for Deep Learning model types

| | Description |
|---|---|
| Model Type | Lets you choose a model type: Deep Learning or Machine Learning (Classical). Note: Refer to the topic Machine Learning (Classical) Model Types for information about the algorithms and feature presets for Machine Learning (Classical) models. |
| Architecture | Lists the default Deep Learning model architectures supplied with the Segmentation Wizard. |
| Architecture Description | Provides a short description of the selected architecture and a link for further information. Autoencoder… Generic autoencoder. Additional information about Autoencoder is available online at: https://en.wikipedia.org/wiki/Autoencoder. DeepLabV3+… Semantic segmentation model. Refer to Encoder-Decoder with Atrous Separable Convolution for Semantic Image Segmentation for additional information about DeepLabV3+ (also available online at: https://arxiv.org/pdf/1802.02611.pdf). FC-DenseNet… Semantic segmentation model. Refer to The One Hundred Layers Tiramisu: Fully Convolutional DenseNets for Semantic Segmentation for additional information about FC-DenseNet (also available online at: https://arxiv.org/pdf/1611.09326.pdf). PSPNet… Semantic segmentation model. Refer to Pyramid Scene Parsing Network for additional information about PSPNet (also available online at: https://arxiv.org/pdf/1612.01105.pdf). Sensor3D… Semantic segmentation model using convolutional LSTM. Refer to Deep Sequential Segmentation of Organs in Volumetric Medical Scans for additional information about Sensor3D (also available online at: https://arxiv.org/pdf/1807.02437.pdf). U-Net… All-purpose model designed especially for medical image segmentation. Refer to U-Net: Convolutional Networks for Biomedical Image Segmentation for additional information about U-Net (also available online at: https://arxiv.org/pdf/1505.04597.pdf). U-Net 3D… 3D implementation of U-Net. Refer to U-Net: Convolutional Networks for Biomedical Image Segmentation for additional information about U-Net (also available online at: https://arxiv.org/pdf/1505.04597.pdf). Note: Currently, only U-Net 3D is a fully 3D model that uses 3D convolutions. The number of input slices for this model is determined by the input size, which must be cubic (for example, 32x32x32). U-Net uses 2D convolutions, but can take 3D input patches for which you can choose the number of slices. In some cases, 3D models can be more reliable for segmentation tasks. |
| Input Dimension | Lets you choose to train slice-by-slice (2D) or to allow training over multiple slices (3D). Note: Not all data is suitable for training in 3D, for example when features are not consistent over multiple slices. |
| Parameters | Lists the hyperparameters associated with the selected architecture and the default values for each. Note: Refer to the documents referenced in the Architecture Description box for information about the implemented architectures and their associated parameters. |
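
The relationship between architecture, input dimension, and patch shape described in the table can be sketched as follows. This is only an illustration under stated assumptions: the function `input_patch_shape`, its arguments, and the `(slices, height, width)` ordering are hypothetical and are not part of the Segmentation Wizard.

```python
from typing import Optional, Tuple


def input_patch_shape(
    architecture: str,
    input_dimension: str,
    size: Tuple[int, ...],
    slices: Optional[int] = None,
) -> Tuple[int, ...]:
    """Return a hypothetical (slices, height, width) patch shape.

    Assumptions (not taken from the product): patches are ordered as
    (slices, height, width) and a 2D patch is a single slice.
    """
    if architecture == "U-Net 3D":
        # Fully 3D model: the input size must be cubic, and the number of
        # input slices is simply the edge length of the cube.
        if len(size) != 3 or len(set(size)) != 1:
            raise ValueError("U-Net 3D needs a cubic input size, e.g. (32, 32, 32)")
        return tuple(size)

    if len(size) != 2:
        raise ValueError("expected a (height, width) size for 2D-convolution models")
    height, width = size

    if input_dimension == "2D":
        # Slice-by-slice training: one slice per patch.
        return (1, height, width)
    if input_dimension == "3D":
        # 2D convolutions over a multi-slice patch: the slice count is chosen freely.
        if slices is None or slices < 1:
            raise ValueError("choose a positive number of slices for 3D input")
        return (slices, height, width)
    raise ValueError("input_dimension must be '2D' or '3D'")


print(input_patch_shape("U-Net 3D", "3D", (32, 32, 32)))
print(input_patch_shape("U-Net", "3D", (64, 64), slices=5))
```

The sketch mainly shows why U-Net 3D has no separate slice setting: with a cubic input, the slice count is already fixed by the input size.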

A limited number of the parameters for Deep Learning model architectures are available for editing in the Segmentation Wizard. These are listed below for the default models that are available.

Note that Dragonfly's Deep Learning Tool provides more control for editing Deep Learning model architectures. You can also edit the training parameters of a Deep Learning model after it is generated (see Training Parameters for Deep Learning Models).

| | Description |
|---|---|
| Autoencoder | The following hyperparameters can be adjusted for Autoencoder architectures: Initial filter count… Filter count at the first convolutional layer. Kernel size… Kernel size of the convolutional filters. Pooling size… Pooling window size. |
| DeepLabV3+ | The following hyperparameters can be adjusted for DeepLabV3+ architectures: Backbone… Backbone to use (Xception or MobileNetV2). Patch size… Fixed size of the input patches. Output stride… Ratio of the image size to the encoder output size. |
| FC-DenseNet | The following hyperparameters can be adjusted for FC-DenseNet architectures: Model type… Model variation to be generated (FC-DenseNet56, FC-DenseNet67, or FC-DenseNet103). |
| PSPNet | The following hyperparameters can be adjusted for PSPNet architectures: Backbone… Backbone to use (ResNet50 or ResNet101). Patch size… Fixed size of the input patches. |
| Sensor3D | The following hyperparameters can be adjusted for Sensor3D architectures: Depth level… Depth of the network, as determined by the number of pooling layers. Initial filter count… Filter count at the first convolutional layer. |
| U-Net | The following hyperparameters can be adjusted for U-Net architectures: Depth level… Depth of the network, as determined by the number of pooling layers. Initial filter count… Filter count at the first convolutional layer. |
| U-Net 3D | The following hyperparameters can be adjusted for U-Net 3D architectures: Topology… The topology of the model. Initial filter count… Filter count at the first convolutional layer. Use batch normalization… Determines if batch normalization will be applied (True or False). |
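
The arithmetic behind Output stride, Depth level, and Initial filter count can be sketched as below. The helper names are hypothetical. The 2x pooling window and the doubling of the filter count at each level are assumptions borrowed from common U-Net-style designs, not documented behavior of the Segmentation Wizard.

```python
from typing import List


def encoder_output_size(patch_size: int, output_stride: int) -> int:
    """Output stride is the ratio of image size to encoder output size,
    so the encoder output size is patch_size / output_stride."""
    if patch_size % output_stride != 0:
        raise ValueError("patch size should be a multiple of the output stride")
    return patch_size // output_stride


def size_after_pooling(patch_size: int, depth_level: int, pooling_size: int = 2) -> int:
    """Spatial size after `depth_level` pooling layers.

    Assumption: every pooling layer uses the same window (2 by default).
    """
    factor = pooling_size ** depth_level
    if patch_size % factor != 0:
        raise ValueError("patch size should be divisible by pooling_size ** depth_level")
    return patch_size // factor


def filters_per_level(initial_filter_count: int, depth_level: int) -> List[int]:
    """Filter counts from the first level down to the deepest level.

    Assumption: the filter count doubles after each pooling layer.
    """
    return [initial_filter_count * 2 ** level for level in range(depth_level + 1)]


print(encoder_output_size(512, 16))   # 32
print(size_after_pooling(256, 5))     # 8
print(filters_per_level(64, 4))       # [64, 128, 256, 512, 1024]
```

Under these assumptions, a deeper network or a larger output stride both shrink the smallest feature map, which is why the patch size needs to be large enough for the chosen settings.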

Refer to the following instructions for information about generating new Deep Learning models in the Model Generator dialog. You can also create and generate new models in the Model Generation Strategy dialog (see Model Generation Strategies).

- Click the New button on the Models tab.

The Model Generator dialog appears.

- Choose Deep Learning as the Model Type.

- Choose the required architecture in the Architecture drop-down menu, as shown below.

Note: Refer to Architecture Description for information about the different architectures that are available and references to the literature.

- Choose the required Input dimension (2D or 3D).

Note: Refer to Input Dimension for information about selecting an input dimension.

- Optionally, modify the hyperparameters for the selected architecture.

Note: Refer to Editable Parameters for Deep Learning Model Architectures for information about resetting the parameters for a specific architecture.

- Click Generate.

After processing is complete, the new model appears in the Models list.

- Do one of the following:

- Generate additional models.

- Click the Close button to close the Model Generator dialog.

Note: You can edit the training parameters of a Deep Learning model after it is generated (see Training Parameters for Deep Learning Models).

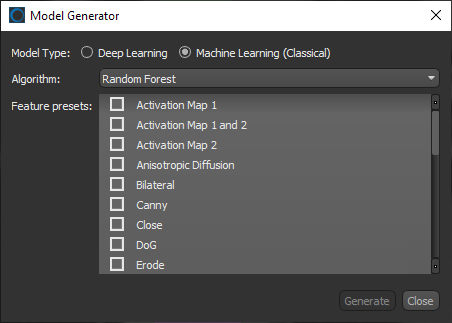

The Model Generator dialog provides options — Algorithms and Feature presets — for generating new Machine Learning (Classical) models.

Click the New button on the Models tab or in the Model Generation Strategy dialog to open the Model Generator dialog, shown below. Then select Machine Learning (Classical) as the Model Type to view the options for generating new Machine Learning (Classical) models.

Model Generator dialog for Machine Learning (Classical) model types

| | Description |
|---|---|
| Model Type | Lets you choose a model type: Deep Learning or Machine Learning (Classical). Note: Refer to the topic Deep Learning Model Types for information about the architectures and parameters for Deep Learning models. |
| Algorithm | The available machine learning algorithms are accessible through the Algorithm drop-down menu. Each algorithm reacts differently to the same inputs, so trying different algorithms will most likely highlight the best choice for a given dataset. AdaBoost… An AdaBoost classifier is a meta-estimator that begins by fitting a classifier on the original dataset and then fits additional copies of the classifier on the same dataset, but with the weights of incorrectly classified instances adjusted so that subsequent classifiers focus more on difficult cases. Ref: http://scikit-learn.org/stable/modules/generated/sklearn.ensemble.AdaBoostClassifier.html Bagging… A Bagging classifier is an ensemble meta-estimator that fits base classifiers each on random subsets of the original dataset and then aggregates their individual predictions (either by voting or by averaging) to form a final prediction. Such a meta-estimator can typically be used as a way to reduce the variance of a black-box estimator (e.g., a decision tree), by introducing randomization into its construction procedure and then making an ensemble out of it. Ref: http://scikit-learn.org/stable/modules/generated/sklearn.ensemble.BaggingClassifier.html Extra Trees… This classifier implements a meta-estimator that fits a number of randomized decision trees (also known as extra-trees) on various sub-samples of the dataset and uses averaging to improve the predictive accuracy and control over-fitting. Ref: http://scikit-learn.org/stable/modules/generated/sklearn.ensemble.ExtraTreesClassifier.html Gradient Boosting… Builds an additive model in a forward stage-wise fashion; it allows for the optimization of arbitrary differentiable loss functions. In each stage, `n_classes_` regression trees are fit on the negative gradient of the binomial or multinomial deviance loss function. Binary classification is a special case where only a single regression tree is induced. Ref: http://scikit-learn.org/stable/modules/generated/sklearn.ensemble.GradientBoostingClassifier.html Random Forest… A Random Forest is a meta-estimator that fits a number of decision tree classifiers on various sub-samples of the dataset and uses averaging to improve the predictive accuracy and control over-fitting. The sub-sample size is always the same as the original input sample size but the samples are drawn with replacement if Ref: http://scikit-learn.org/stable/modules/generated/sklearn.ensemble.RandomForestClassifier.html |
| Feature Presets | The dataset features presets are a stack of filters that are applied to a dataset to extract information to train a classifier (see Default Dataset Features Presets for information about the available filters). Note: Each filter in a dataset feature preset is applied separately during training and classification. The filters in a preset are not applied in a cascade or as a composite. |
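
The difference between applying the filters of a preset separately and applying them in a cascade can be sketched with a toy 1D intensity profile. Everything here is hypothetical: the filters `identity`, `mean3`, and `gradient` are stand-ins chosen for illustration, not the filters of any actual preset.

```python
from typing import Callable, List, Sequence

Filter = Callable[[Sequence[float]], List[float]]


def identity(signal: Sequence[float]) -> List[float]:
    """Raw intensity as a feature."""
    return [float(value) for value in signal]


def mean3(signal: Sequence[float]) -> List[float]:
    """3-sample moving average, repeating the edge values."""
    padded = [signal[0], *signal, signal[-1]]
    return [(padded[i] + padded[i + 1] + padded[i + 2]) / 3 for i in range(len(signal))]


def gradient(signal: Sequence[float]) -> List[float]:
    """Central difference, repeating the edge values."""
    padded = [signal[0], *signal, signal[-1]]
    return [(padded[i + 2] - padded[i]) / 2 for i in range(len(signal))]


def extract_features(signal: Sequence[float], preset: Sequence[Filter]) -> List[List[float]]:
    """Apply every filter to the ORIGINAL signal; each filter yields one feature column."""
    columns = [apply_filter(signal) for apply_filter in preset]
    return [list(row) for row in zip(*columns)]  # one feature vector per sample


def cascade(signal: Sequence[float], preset: Sequence[Filter]) -> List[float]:
    """Shown for contrast only: each filter consumes the previous filter's output."""
    output = list(signal)
    for apply_filter in preset:
        output = apply_filter(output)
    return output


profile = [0, 0, 3, 0, 0]
features = extract_features(profile, [identity, mean3, gradient])
print(features[2])  # [3.0, 1.0, 0.0]
print(cascade(profile, [mean3, gradient]))
```

With separate application, the classifier receives one value per filter for every sample, so each filter contributes independent information about the original data.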

Refer to the following instructions for information about generating new Machine Learning (Classical) models in the Model Generator dialog. You can also create and generate new models in the Model Generation Strategy dialog (see Model Generation Strategies).

- Click the New button on the Models tab.

The Model Generator dialog appears.

- Choose Machine Learning (Classical) as the Model Type.

- Choose an algorithm in the Algorithm drop-down menu, as shown below.

Note: Refer to Algorithm for information about the different algorithms that are available and references to the literature.

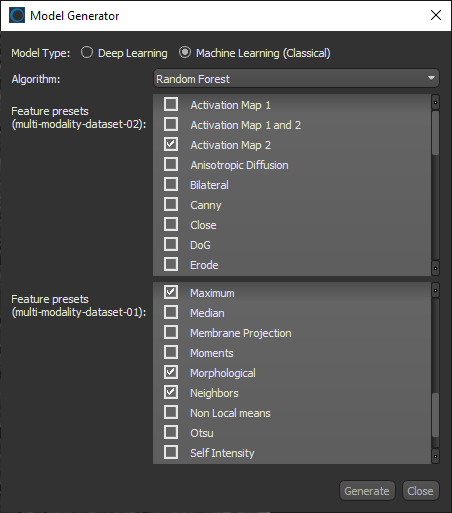

- Choose the feature presets that you want to use to train the classifier (see Feature Presets).

You should note that you can choose different feature trees for each input dataset when you are working with multi-modality models, as shown in the following screen capture.

- Click Generate.

After processing is complete, the new model appears in the Models list.

- Do one of the following:

- Generate additional models.

- Click the Close button to close the Model Generator dialog.
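
Outside the dialog, trying several algorithms on the same features can be sketched with the scikit-learn classifiers named in the references above. This is a minimal sketch, assuming NumPy and scikit-learn are installed. The `ALGORITHMS` mapping, the `generate_model` helper, and the synthetic data are hypothetical and do not reflect how the Segmentation Wizard creates or trains models internally.

```python
import numpy as np
from sklearn.ensemble import (
    AdaBoostClassifier,
    BaggingClassifier,
    ExtraTreesClassifier,
    GradientBoostingClassifier,
    RandomForestClassifier,
)

# Hypothetical mapping from the names in the Algorithm menu to scikit-learn classes.
ALGORITHMS = {
    "AdaBoost": AdaBoostClassifier,
    "Bagging": BaggingClassifier,
    "Extra Trees": ExtraTreesClassifier,
    "Gradient Boosting": GradientBoostingClassifier,
    "Random Forest": RandomForestClassifier,
}


def generate_model(algorithm: str, random_state: int = 0):
    """Create an untrained classifier with default settings and a fixed seed."""
    return ALGORITHMS[algorithm](random_state=random_state)


# Synthetic stand-in for per-pixel features (200 samples, 3 features) and two classes.
rng = np.random.default_rng(0)
features = rng.normal(size=(200, 3))
labels = (features[:, 0] + features[:, 1] > 0).astype(int)

# Fit every algorithm on the same inputs and compare how each one responds.
models = {name: generate_model(name).fit(features, labels) for name in ALGORITHMS}
scores = {name: model.score(features, labels) for name, model in models.items()}

for name, score in scores.items():
    print(f"{name}: training accuracy {score:.2f}")
```

Training accuracy is used here only to keep the sketch short. A fair comparison between algorithms would score each model on data that was held out from training.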